
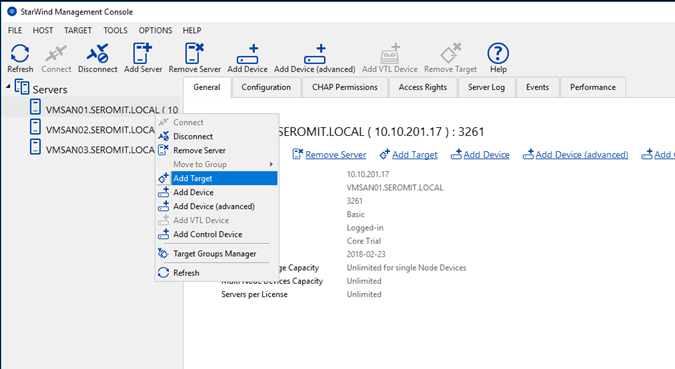

I could only muster an SSD cache of 20GB, because ZFS requires a lot of RAM to hold the metadata for the L2 cache. It does have an awesome feature, though, called a SLOG, which is like an SSD write cache: you use mirrored drives to store deferred writes, and if the power goes out, you're completely safe. The performance was great at first but degraded over time.

Always looking for a thrill, though, I wanted more. StarWind Virtual SAN is a software-defined storage solution that replicates data across several nodes to ensure availability; the data are mirrored between two or more nodes. So I used StarWind Virtual SAN to set up an LSFS drive and likewise presented the data back to my PC as an iSCSI target. LSFS helps by being log-structured, which really speeds up your writes even though they're just going onto spinning disks, but your data gets really, really fragmented over time. It let me use my full 120GB SSD as cache and was more stable, although it has no deferred writes (or at least none of the kind that won't be lost in a power outage), meaning my writes were a little slower. On the downside, it flushed the L2 cache on a reboot, which is bad for me as I don't leave my lab powered on overnight.

Then I tried VELOSSD, and it was a disaster. You see, it did deferred writes by default. That could still have been OK if I'd gone with a flat file system instead of LSFS. It was also not that stable, and I suffered occasional BSODs.
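To make the log-structured trade-off concrete, here is a minimal Python sketch of a toy block store, assuming one logical block per write. The class `LogStructuredStore` and its methods are hypothetical names made up for illustration; this is not how StarWind's LSFS is actually implemented. It shows the two effects described above: random overwrites become sequential appends at the end of the log, and a range that was laid down contiguously ends up scattered across the log.

```python
class LogStructuredStore:
    """Toy log-structured block layer (hypothetical, not StarWind's LSFS).

    Every write is appended to the end of the log, so random logical writes
    become sequential physical writes. A map tracks where the latest copy
    of each logical block lives.
    """

    def __init__(self) -> None:
        self.log: list[bytes] = []           # physical log, append-only
        self.block_map: dict[int, int] = {}  # logical block -> physical offset

    def write(self, lba: int, data: bytes) -> int:
        # Append instead of overwriting in place; the old copy becomes stale.
        self.log.append(data)
        self.block_map[lba] = len(self.log) - 1
        return self.block_map[lba]

    def read(self, lba: int) -> bytes:
        return self.log[self.block_map[lba]]

    def physical_layout(self, lbas: range) -> list[int]:
        # Physical offsets a logically sequential read has to visit.
        return [self.block_map[lba] for lba in lbas]

    def fragments(self, lbas: range) -> int:
        # Count contiguous physical runs; 1 means the range is unfragmented.
        offsets = self.physical_layout(lbas)
        runs = 1
        for prev, cur in zip(offsets, offsets[1:]):
            if cur != prev + 1:
                runs += 1
        return runs


if __name__ == "__main__":
    store = LogStructuredStore()
    for lba in range(8):                 # lay down 8 blocks in order
        store.write(lba, b"v1")
    print(store.fragments(range(8)))     # 1
    for lba in (5, 1, 6, 3):             # random overwrites append at the tail
        store.write(lba, b"v2")
    print(store.physical_layout(range(8)))  # [0, 9, 2, 11, 4, 8, 10, 7]
    print(store.fragments(range(8)))     # 8
```

A real log-structured system also needs garbage collection to reclaim the stale copies left behind by overwrites; the sketch leaves that out and simply lets the log grow.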

My RAID file system was damaged beyond repair and would only show up as RAW.

Then I tried Diskeeper for a while, but after an update I got BSODs on boot-up. Whatever I did to the system drive to cache it, Windows 10 didn't like anymore; uninstalling it from Safe Mode fixed it for me, and at least it didn't cost me data like VELO did. I don't mean to run these other software solutions down; they just didn't do it for me.

Primo was next on my list to try, and I can say that after a week I'm super impressed: it's super stable, with no other PC issues. I usually have about 6 VMs running, and I've experienced very little data churn, at least since my L2 cache finally did a large gather.

I hope this whole endeavour works out; I'm sick of the downtime between trying different caches.

I've been using PrimoCache since it was called FancyCache (so around 5-6 years now). The only problem I had years back, which I reported as a bug, was with the Intel RST configuration software, and Romex fixed it rather quickly once they had the info. Since then I've never had a situation where enabling the PrimoCache L1 write cache has negatively affected me in any way; I've never had an issue of any type, even with BSODs. For the last ~3 years of that I've had a UPS on the affected hardware, with L1 turned on in Primo and configured with long write timers. The UPS has covered the hardware well, and system sleeps/hibernates and even the occasional bluescreen haven't harmed the drive data.

The only time I was ever affected was during one of the Windows 10 rollouts, where they re-enumerated drives, and for some reason it didn't play well with the *Beta* version of PrimoCache I was testing at the time. There are occasions where you might lose the L1/L2 contents and have to start re-populating the caches with real data (they flush for one reason or another). Turn on the L1 if you're covered by a UPS and don't have overclocking issues.
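Deferred writes are why the UPS advice matters. Below is a minimal Python sketch of a write-back cache with a flush timer, assuming one write per second on a simulated clock. `WriteBackCache`, `flush_interval` and `power_loss` are hypothetical names, and this is not how PrimoCache implements its deferred writes. It shows that a longer write timer leaves more acknowledged data sitting only in RAM, and that getting a flush in before the power is cut (which is what a UPS buys you) brings the loss back to zero.

```python
from dataclasses import dataclass, field


@dataclass
class WriteBackCache:
    """Toy deferred-write cache (hypothetical, not PrimoCache's design)."""

    flush_interval: float                             # the "write timer", in seconds
    disk: dict[int, bytes] = field(default_factory=dict)   # durable storage
    dirty: dict[int, bytes] = field(default_factory=dict)  # acknowledged, RAM only
    last_flush: float = 0.0

    def write(self, lba: int, data: bytes, now: float) -> None:
        # The write is acknowledged immediately but only lives in RAM for now.
        self.dirty[lba] = data
        self.tick(now)

    def tick(self, now: float) -> None:
        # Flush dirty blocks once the timer has expired.
        if now - self.last_flush >= self.flush_interval:
            self.flush(now)

    def flush(self, now: float) -> None:
        self.disk.update(self.dirty)
        self.dirty.clear()
        self.last_flush = now

    def power_loss(self) -> int:
        # Anything not yet flushed is gone; return how many blocks were lost.
        lost = len(self.dirty)
        self.dirty.clear()
        return lost


if __name__ == "__main__":
    short = WriteBackCache(flush_interval=1.0)
    long_timer = WriteBackCache(flush_interval=60.0)
    with_ups = WriteBackCache(flush_interval=60.0)
    for t in range(10):  # one write per second for 10 seconds
        for cache in (short, long_timer, with_ups):
            cache.write(t, b"data", now=float(t))
    with_ups.flush(now=10.0)       # the UPS gives time to flush before shutdown
    print(short.power_loss())      # 0
    print(long_timer.power_loss()) # 10
    print(with_ups.power_loss())   # 0
```

The longer timer is not wrong in itself (fewer, larger flushes are kinder to the disks), but it widens the window in which an unprotected power cut loses data.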


Author: Tony
